Various Projects in 3D Photography and Image-based Modeling and Rendering


Course: CS 174B - Three-Dimensional Photography and Rendering

Course Description

State of the art in three-dimensional photography and image-based rendering: how to use cameras and light to capture the shape and appearance of real objects and scenes. The process provides a simple way to acquire three-dimensional models of unparalleled detail and realism. Applications range from entertainment (reverse engineering and post-processing of movies, generation of realistic synthetic objects and characters) to medicine (modeling of biological structures from imaging data), mixed reality (augmentation of video), and security (visual surveillance). The course covers fundamental analytical tools for modeling and inferring the geometric (shape) and photometric (reflectance, illumination) properties of objects and scenes, and for rendering and manipulating novel views.

Image Mosaic


An image mosaic is a single image constructed from many smaller images, giving the appearance that it was taken by a camera with a much wider field of view. Shown to the right are two photographs of my room, taken from the same location with only a rotation of the camera between them. This is a requirement: images included in the mosaic must differ only by a rotation of the camera about its center, so that each pair of views is related exactly by a planar projective transformation. The first step is to establish points of correspondence between the photos. To do this, we simply click points that "match" in both images. For instance, we could select the corner of the notebook in both images, creating a pair of corresponding image points. We need at least four point pairs, because the transformation has eight degrees of freedom and each pair contributes two equations. Next we compute a homography, a 3×3 matrix that maps the (homogeneous) 2D pixel coordinates of one image to those of the other. We do this by stacking the two linear equations from each point pair into a matrix and computing its singular value decomposition (SVD); the entries of the homography are given by the singular vector associated with the smallest singular value.
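
The homography estimation described above can be sketched in dependency-free Python. Note that this sketch swaps in a different solver than the SVD used in the text: it fixes the bottom-right entry of H to 1 and solves the remaining 8 unknowns in the least-squares sense through the normal equations. That shortcut fails if the true H[2][2] is zero, and with real pixel coordinates one would normally rescale the points first for numerical stability. The function names are hypothetical.

```python
def solve_linear(M, b):
    """Solve the square system M z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Work on an augmented copy so the caller's lists are left untouched.
    A = [list(row) + [rhs] for row, rhs in zip(M, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, n):
            f = A[r][col] / A[col][col]
            for c in range(col, n + 1):
                A[r][c] -= f * A[col][c]
    # Back substitution on the upper-triangular system.
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (A[r][n] - sum(A[r][c] * z[c] for c in range(r + 1, n))) / A[r][r]
    return z


def fit_homography(src, dst):
    """Estimate H (3x3, with H[2][2] fixed to 1) so that dst ~ H * src, from >= 4 pairs."""
    if len(src) != len(dst) or len(src) < 4:
        raise ValueError("need at least four point pairs")
    rows, rhs = [], []
    for (x, y), (xp, yp) in zip(src, dst):
        # Two equations per pair, from x' = (h11 x + h12 y + h13) / (h31 x + h32 y + 1), same for y'.
        rows.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        rhs.append(xp)
        rows.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        rhs.append(yp)
    # Normal equations (A^T A) h = A^T b give the least-squares solution.
    AtA = [[sum(r[i] * r[j] for r in rows) for j in range(8)] for i in range(8)]
    Atb = [sum(r[i] * v for r, v in zip(rows, rhs)) for i in range(8)]
    h = solve_linear(AtA, Atb)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def apply_homography(H, x, y):
    """Map (x, y) through H, including the perspective divide."""
    X = H[0][0] * x + H[0][1] * y + H[0][2]
    Y = H[1][0] * x + H[1][1] * y + H[1][2]
    W = H[2][0] * x + H[2][1] * y + H[2][2]
    return X / W, Y / W


# Usage: recover a known homography from five synthetic correspondences.
H_true = [[1.2, 0.1, 5.0], [-0.05, 0.9, 3.0], [0.01, 0.02, 1.0]]
src = [(0, 0), (4, 0), (4, 3), (0, 3), (2, 1)]
dst = [apply_homography(H_true, x, y) for x, y in src]
H = fit_homography(src, dst)
```

With exactly four pairs the system is square and the least-squares step reduces to an exact solve; extra pairs (as in the five-point usage) average out clicking error.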

To build the mosaic, we first create an image large enough to hold both images. Next we select one image to be the reference image, which remains unmodified (here, the second image of my room). We then perform backward mapping: for each pixel of the mosaic, we use the homography computed earlier to find the location in the source images that the pixel comes from. If the pixel maps into the reference image, we copy it directly (so the reference image is unchanged). If it maps into the other image, it generally lands between pixel centers, so we use bilinear interpolation of the four neighboring pixels to compute an approximate value. This reduces the jagged aliasing artifacts that simply copying the nearest pixel would produce. Below is the image mosaic of my room. Notice that the first image is warped significantly to match the second image.
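
The backward-mapping loop and bilinear interpolation can be sketched as follows. This is a minimal sketch under several assumptions: images are grayscale lists of rows, the reference image is pasted at a chosen offset, and the caller passes a homography that maps mosaic coordinates into the other image (that is, the inverse direction of a forward warp, with the offset already folded in). Mosaic pixels that no image covers stay `None`. All names are hypothetical.

```python
import math


def map_point(H, u, v):
    """Apply a 3x3 homography to (u, v); return None if the point maps to infinity."""
    X = H[0][0] * u + H[0][1] * v + H[0][2]
    Y = H[1][0] * u + H[1][1] * v + H[1][2]
    W = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(W) < 1e-12:
        return None
    return X / W, Y / W


def bilinear(img, x, y):
    """Bilinearly interpolate img at real-valued (x, y); None if outside the image."""
    h, w = len(img), len(img[0])
    if x < 0 or y < 0 or x > w - 1 or y > h - 1:
        return None
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    # Blend horizontally along the two rows, then vertically between them.
    top = (1 - ax) * img[y0][x0] + ax * img[y0][x1]
    bottom = (1 - ax) * img[y1][x0] + ax * img[y1][x1]
    return (1 - ay) * top + ay * bottom


def build_mosaic(ref, other, H_mosaic_to_other, width, height, ref_offset=(0, 0)):
    """Paste ref unchanged, then fill the remaining pixels by backward mapping into other."""
    ox, oy = ref_offset
    mosaic = [[None] * width for _ in range(height)]
    # Reference image: copied directly (assumed to fit inside the mosaic at this offset).
    for r, row in enumerate(ref):
        for c, val in enumerate(row):
            mosaic[r + oy][c + ox] = val
    # Backward mapping: ask where each still-empty mosaic pixel comes from in `other`.
    for v in range(height):
        for u in range(width):
            if mosaic[v][u] is not None:
                continue
            p = map_point(H_mosaic_to_other, u, v)
            if p is not None:
                mosaic[v][u] = bilinear(other, p[0], p[1])
    return mosaic
```

Mapping backward (from each mosaic pixel to its source) rather than forward guarantees that every covered mosaic pixel receives exactly one value, with no holes.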

Below are more image mosaic examples, including one built from six images. The process for more than two images is nearly identical, except that we compute a homography between each pair of adjacent images. To obtain the homography between any two images, we multiply together the homographies of all the adjacent pairs that lie between them.
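
Chaining adjacent-pair homographies is plain 3×3 matrix multiplication, as in this small sketch. The ordering convention is an assumption: `pairwise[i]` maps points of image i+1 into image i, so the product H01 · H12 · … maps the last image into image 0. The function names are hypothetical.

```python
from functools import reduce


def matmul3(A, B):
    """Product of two 3x3 matrices given as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def chain_homographies(pairwise):
    """Compose adjacent-pair homographies into a single one.

    Assumed convention: pairwise[i] maps image i+1 into image i, so the left-to-right
    product maps the last image into image 0.
    """
    H = reduce(matmul3, pairwise)
    # Homographies are only defined up to scale; rescale so H[2][2] == 1
    # (assumes that entry is nonzero).
    s = H[2][2]
    return [[v / s for v in row] for row in H]
```

For six images, a call such as `chain_homographies([H01, H12, H23, H34, H45])` (hypothetical names) would map image 5 into image 0. Order matters because matrix multiplication is not commutative: scaling then translating differs from translating then scaling.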


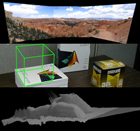

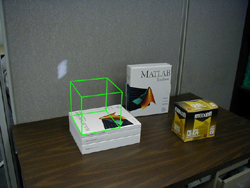

Match Moving


Match moving is a special effects technique for mathematically inserting a virtual object into real imagery so that it appears with the correct position, orientation, and scale for the camera that captured each image. This technology is used to display the line of scrimmage in televised football games, as well as the advertisements that appear along the field. Shown to the right are three images of a scene. The goal is to insert a virtual cube into the scene in all three images. The operation is performed on one pair of images at a time.

The first step is to establish points of correspondence between two of the images. As before, we simply click points that "match" in both images; for instance, we could select the corner of the stack of books in both images, creating a pair of corresponding image points. We need at least eight point pairs, because the standard linear (eight-point) method for recovering camera motion uses one epipolar constraint per pair to solve for the nine entries of the essential matrix, which is defined only up to scale.

The next step is to calibrate the camera. Given the camera's calibration matrix, we multiply each corresponding point by its inverse, converting it from pixel coordinates to normalized image-plane coordinates that are common to both views.

We then reconstruct the camera motion, that is, the rotation and translation of the camera between the pair of images. This is the most mathematically involved step, because decomposing the essential matrix yields four possible rotation/translation pairs (the 2D measurements cannot distinguish between them), and only one is physically valid. We resolve this ambiguity by reconstructing the geometry of the scene: we triangulate the corresponding points with each candidate and keep the pair for which the points lie in front of both cameras.

Lastly, we insert the virtual object. We set up a cube of 8 points in its own object space. For each image, we transform each cube point into image-plane coordinates using the rotation/translation pair found above, and then into pixel coordinates using the calibration matrix. Below are the three images with the virtual cube inserted.
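
The calibration and motion-recovery steps above can be partly sketched as follows. The sketch assumes a calibration matrix K = [[fx, s, cx], [0, fy, cy], [0, 0, 1]] and the convention X2 = R X1 + t between the two camera frames, under which normalized points satisfy the epipolar constraint x2^T E x1 = 0 with E = [t]x R. It shows only three pieces: applying K^-1 in closed form, the linear row each correspondence contributes to the eight-point system, and the in-front-of-both-cameras test for choosing among the four (R, t) candidates. Solving the stacked rows for E, decomposing E, and triangulating are not shown. All names are hypothetical.

```python
def pixel_to_normalized(K, u, v):
    """Apply K^-1 to pixel (u, v) in closed form for an upper-triangular K."""
    fx, s, cx = K[0]
    fy, cy = K[1][1], K[1][2]
    y = (v - cy) / fy
    x = (u - cx - s * y) / fx
    return x, y


def epipolar_row(p1, p2):
    """Row of the eight-point system A e = 0, where e is E flattened row by row.

    Expands x2^T E x1 = 0 with x1 = (x1, y1, 1) and x2 = (x2, y2, 1).
    """
    x1, y1 = p1
    x2, y2 = p2
    return [x2 * x1, x2 * y1, x2, y2 * x1, y2 * y1, y2, x1, y1, 1.0]


def epipolar_residual(E, p1, p2):
    """Value of x2^T E x1; zero for a perfect correspondence under the true E."""
    flat = [v for row in E for v in row]
    return sum(a * b for a, b in zip(epipolar_row(p1, p2), flat))


def in_front_of_both(R, t, X):
    """Cheirality test for a triangulated point X expressed in the first camera's frame."""
    depth2 = sum(R[2][j] * X[j] for j in range(3)) + t[2]
    return X[2] > 0 and depth2 > 0
```

Stacking one `epipolar_row` per correspondence gives at least eight rows; the candidate (R, t) kept at the end is the one for which `in_front_of_both` holds for the triangulated points.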


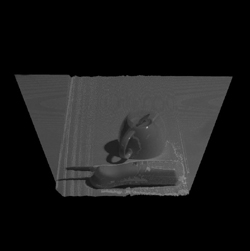
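
The final match-moving step, projecting the cube's corners into an image, can be sketched like this. It assumes the cube is placed directly in the first camera's frame (so image one uses R = I, t = 0 and the other image uses its recovered R, t), an axis-aligned cube with a chosen center and edge length, and the same K layout as before. The helper names and the example placement are hypothetical.

```python
def project(K, R, t, X):
    """Project 3D point X to pixel (u, v) for a camera with pose (R, t) and intrinsics K."""
    # Rigid transform into the camera frame: Xc = R X + t.
    Xc = [sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3)]
    if Xc[2] <= 0:
        raise ValueError("point is behind the camera")
    # Perspective divide gives normalized image-plane coordinates.
    x, y = Xc[0] / Xc[2], Xc[1] / Xc[2]
    # The calibration matrix K converts image-plane coordinates to pixels.
    u = K[0][0] * x + K[0][1] * y + K[0][2]
    v = K[1][1] * y + K[1][2]
    return u, v


def cube_corners(center, size):
    """8 corners of an axis-aligned cube; index bits (4, 2, 1) select the x, y, z side."""
    cx, cy, cz = center
    h = size / 2.0
    return [(cx + dx * h, cy + dy * h, cz + dz * h)
            for dx in (-1, 1) for dy in (-1, 1) for dz in (-1, 1)]


# Two corners share an edge exactly when their indices differ in a single bit.
CUBE_EDGES = [(i, j) for i in range(8) for j in range(i + 1, 8) if bin(i ^ j).count("1") == 1]
```

Drawing the 12 segments in `CUBE_EDGES` between the projected corners renders the wireframe cube; repeating the projection with each image's (R, t) keeps the cube fixed in the scene as the camera moves.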

Shadow Carving
